We’re all familiar with increasing rates of data growth, and practically every organization using Microsoft 365 has an ever-growing tenant which is accumulating Teams, sites, documents and other files by the day. The majority of organizations aren’t making the most of this data though – in the worst cases it could just be another digital landfill, but even in the best case the content grows but doesn’t have much around it in terms of intelligent processing or content services. As a result, huge swathes of content go unnoticed, search gives a poor experience and employees struggle to find what they’re looking for. This is significant given that McKinsey believe the average knowledge worker spends nearly 20% of their time looking for internal information or tracking down colleagues who can help with specific tasks. At the macro level, this can accumulate to a big drag on organizational productivity – valuable insights are missed, time is lost to searching, and opportunities pass by untapped.

In this post, I propose five ways AI can help you get more value from your data.

1. Use AI to add tags and descriptions to your images so they can be searched

Most organizations have a lot of images - perhaps related to products, events, marketing assets, content for intranet news articles, or perhaps captured by a mobile app or Power Apps solution. The problem with images, of course, is that they cannot be searched easily - if you go looking for a particular image within your intranet or digital workplace, chances are you'll be opening a lot of them to see if it's what you want. Images are rarely tagged, and most often are stored in standard document libraries in Microsoft 365 which don't provide a gallery view.

A significant step forward is to use image recognition to automatically add tags and descriptions to your pictures in Microsoft 365. Now, the search engine can do a far better job of returning images - users can enter search terms and perform textual searches rather than relying on your eyeballs and lots of clicks. Certainly, the AI may not autotag your images perfectly - but the chances are that some tagging is better than none. Here are some examples I've used in previous articles to illustrate what you can expect from the Vision API in Azure Cognitive Services:

Image |

Result |

|

|

|

Image |

Result |

|

|

Image |

Result |

|

2. Use AI to extract entities, key phrases and sentiment from your documents

We live in a world of documents, and in the Microsoft 365 world this generally means many Teams and SharePoint sites full of documents, usually with minimal tagging or metadata. Nobody wants the friction of manually tagging every document they save, and even a well-managed DMS may only provide this in targeted areas. Auto-tagging products have existed for a while but have historically provided poor ROI due to expensive price tags and ineffective algorithms. As a result, the act of searching for information typically involves opening several documents and skimming before finding the details you're looking for.

What if we could extract key phrases from documents and known entities (organizations, products, people, concepts etc.) and highlight them next to the title so that the contents are clearer before opening? Technology has moved on, and Azure's Text Analytics API is far superior to the products of the past. In my simple implementation below I'm simply sending each document in a SharePoint library to the API and then storing the resulting key phrases and entities as metadata. I also grab the sentiment score of the document as a bonus:

A more advanced implementation might provide links to more information on entities that have been recognized in the document. The Text Analytics API has a very nice capability here - if an entity is recognized that has a page on Wikipedia (e.g. an organization, location, concept, well-known person etc.), the service will detect this and the response data for the item will include a link to the Wikipedia page:

3. Create searchable transcripts for old calls, meetings and webinars with speech-to-text AI

If your company uses Microsoft 365, then Stream is already capable of advanced speech-to-text processing - specifically, the capability which automatically generates a transcript of the spoken audio within a video. This is extremely powerful for recording that important demo or Teams call for others to view later of course. However, not every organization is using Stream - or perhaps there are other reasons why some existing audio or video files shouldn't be published there.

In any case, many organizations do have lots of this type of content lying around, perhaps from webinars, meetings or old Skype calls. Needless to say, all this voice content is not searchable in any way - so any valuable discussion won't be surfaced when others look for answers with the search engine. That's a huge shame because the spoken insights might be every bit as valuable as those recorded in documents.

Speech-only content comes with other baggage too. As just one example, it may be difficult to consume and understand for anyone whose first language is different from the speaker(s).

The Azure Speech Service allows us to perform many operations such as:

- Speech-to-text

- Text-to-speech

- Speech translation

- Intent recognition

More advanced scenarios also include call recording, full conversation transcription, real-time translation and more. In the call center world, products such as Audiocodes and Genesys have been popular and are increasingly integrated with Azure's advanced speech capabilities - indeed, Azure has dedicated real-time call center capabilities these days.

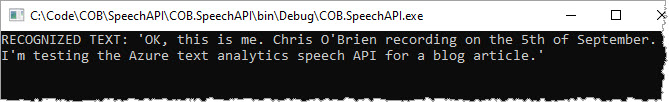

At the simpler end though, if your company does have lots of spoken content which would benefit from transcription you can do this without too much effort. I wrote some sample code against the API and tested a short recording I made with the PC microphone - I don't need to tell you what I said because the API picked it up almost verbatim:

4. Use AI to translate documents

5. Extract information from documents such as invoices, contracts and more

- Forms - extract table data or key/value pairs by training with your forms

- Receipts and business cards - use pre-built models from Microsoft for these

- Extract known locations from a document layout - extract text or tables from specific locations in a document (including handwriting), achieved by highlighting the target areas when training the model

Conclusion

- Analyze call transcripts for sentiment (run a speech-to-text translation and then derive sentiment) and provide a Power BI report

- Perform image recognition from security cameras and send a push notification or post to a Microsoft Team if something specific is detected

- Auto-translate the transcript of a CEO speech or townhall event and publish on a regional intranet